Ingress NGINX is Retiring: Here’s How to Migrate on AKS

March 2026 came and went, and Ingress NGINX went with it. At KubeCon EU in Amsterdam, where we were on the ground, the community kubernetes/ingress-nginx controller was officially archived live on stage. If you have been running it in production and have not yet started planning your migration, now is the time.

There is no single right answer for every AKS setup. The right migration path depends on what WAF story you need, how much operational overhead you are willing to own, and what is already sitting in front of your cluster. This post gives you the facts on four credible options (Traefik, Cilium, Application Gateway for Containers, and the AKS Application Routing add-on) and an honest comparison between them.

TOC

- The Clock Has Run Out: What Happened and What It Means

- Gateway API: The Successor, Not a Drop-in Replacement

- Option: Traefik — Self-Hosted, Maximum Flexibility

- Option: Cilium — If You Own Your Network Stack

- Option: Application Gateway for Containers

- Option: AKS Application Routing Add-on

- Don’t Only Look Inside the Cluster

- Decision Matrix

- Our Recommendation by Scenario

- Wrapping Up

The Clock Has Run Out: What Happened and What It Means

Let’s be precise about what has actually changed, because there is real confusion in the field about which “NGINX on AKS” is affected. Three separate things use the name, and only one of them has reached the end of life:

| What | Who | Status |
|---|---|---|
| kubernetes/ingress-nginx (upstream community) | SIG-Network | EOL March 2026: GitHub read-only, no patches |
| AKS Application Routing add-on (Microsoft-managed NGINX) | Microsoft | Critical security patches through November 2026 |
| F5 NGINX Ingress Controller (nginxinc/kubernetes-ingress) | F5 | Actively maintained, unaffected |

The community controller is done. The repos are read-only. There will be no new CVE patches, no compatibility fixes for upcoming Kubernetes versions, and no new releases. The Kubernetes contributors’ blog warned that the controller may continue to function in the near future, but is likely to break with future Kubernetes versions. Every vulnerability discovered from March 2026 onward is a permanent, unpatched hole in any cluster still running it unmanaged.

If you are running the AKS Application Routing add-on, Microsoft has committed to critical security patches through November 2026, giving you a meaningful runway beyond the upstream EOL. That runway is finite, though, and the Istio-powered Gateway API path Microsoft is building to replace it is not yet GA. Use the time to plan, not to wait indefinitely.

One clarification that matters: The Kubernetes Ingress API itself is not deprecated. Only this specific controller is retiring. You can continue using Ingress resources with any other conformant controller without rewriting your configuration. The API is stable, and every option we cover in this post supports it.

Gateway API: The Successor, Not a Drop-in Replacement

The ecosystem has converged on Gateway API as the long-term successor to the Ingress API. Before choosing a controller, it is worth understanding what Gateway API actually is, what it does better, and where it genuinely adds complexity.

What is Gateway API?

Gateway API reached GA with v1.0 in October 2023. The current stable release is v1.5 (February 2026), which graduated TLSRoute and ListenerSets to stable. The API lives under gateway.networking.k8s.io and is maintained by SIG-Network with a dedicated conformance test suite.

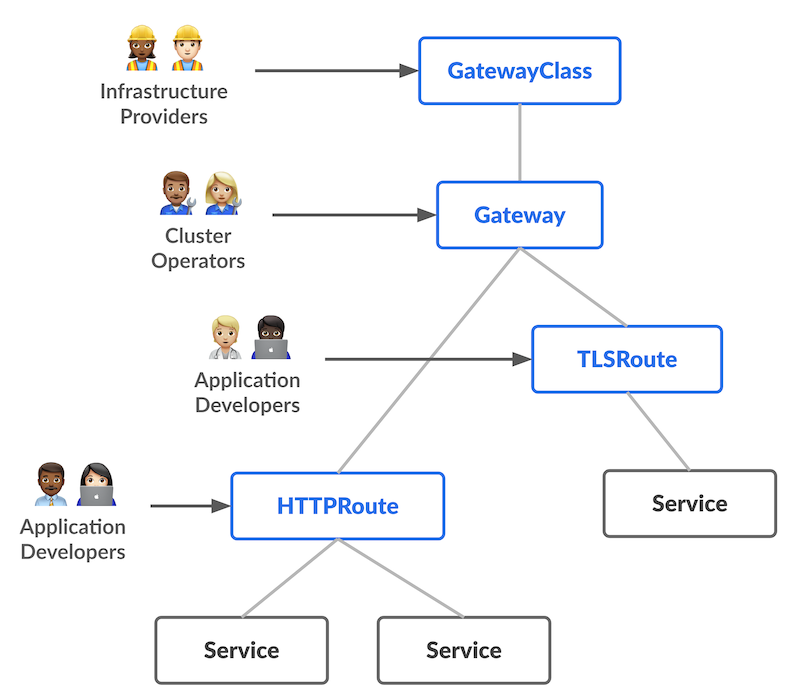

The fundamental shift is a role-oriented resource model that separates concerns, which Ingress conflated into one object:

| Resource | Persona | Responsibility |
|---|---|---|
| GatewayClass | Infrastructure/platform team | Defines the controller implementation |
| Gateway | Cluster operator | Listeners, ports, TLS, allowed namespaces |
| HTTPRoute / GRPCRoute / TLSRoute | App developer | Matching, routing, backend references |
| ReferenceGrant | Resource owner | Explicit cross-namespace access control |

What is genuinely better

The multi-tenancy story is the biggest practical improvement. Application teams own their HTTPRoute resources in their own namespaces. The platform team owns Gateway resources and controls which namespaces can attach routes. Route changes no longer require cluster-admin access or touching a shared ingress resource. This is the model most platform teams want but have been bolting on top of Ingress with fragile RBAC and annotation policies.
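To make that ownership split concrete, here is a minimal sketch. The GatewayClass name `example-class`, the `infra` and `team-a` namespaces, and the `gateway-access` label are all illustrative assumptions, not names from any particular controller.

```yaml
# Platform team: owns the Gateway and decides which namespaces may attach routes.
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: shared-gateway              # hypothetical name
  namespace: infra                  # hypothetical platform namespace
spec:
  gatewayClassName: example-class   # hypothetical; use your controller's GatewayClass
  listeners:
    - name: http
      protocol: HTTP
      port: 80
      allowedRoutes:
        namespaces:
          from: Selector
          selector:
            matchLabels:
              gateway-access: "true"   # hypothetical label set on tenant namespaces
---
# Application team: owns the HTTPRoute in its own namespace.
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: web
  namespace: team-a
spec:
  parentRefs:
    - name: shared-gateway
      namespace: infra
  hostnames:
    - app.example.com
  rules:
    - backendRefs:
        - name: web
          port: 8080
```

The application team only needs RBAC on HTTPRoute objects in `team-a`; whether the route is allowed to attach is decided entirely by the `allowedRoutes` block the platform team controls.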

Advanced routing capabilities that previously required vendor-specific annotations are now first-class fields in Gateway API: header-based routing, weighted traffic splits, request mirroring, URL rewrites, retries, and timeouts.
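As an illustration, the following sketch combines a header match, a weighted split, and a prefix rewrite in one HTTPRoute. The service names, the `x-canary` header, and the parent Gateway are hypothetical; check which of these fields your controller actually implements before relying on them.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: checkout
  namespace: team-a               # hypothetical namespace
spec:
  parentRefs:
    - name: shared-gateway        # hypothetical Gateway
      namespace: infra
  rules:
    # Requests carrying the header go to the new version only.
    - matches:
        - headers:
            - name: x-canary
              value: "true"
      backendRefs:
        - name: checkout-v2
          port: 8080
    # Everything under /checkout has the prefix stripped and is split 90/10.
    - matches:
        - path:
            type: PathPrefix
            value: /checkout
      filters:
        - type: URLRewrite
          urlRewrite:
            path:
              type: ReplacePrefixMatch
              replacePrefixMatch: /
      backendRefs:
        - name: checkout-v1
          port: 8080
          weight: 90
        - name: checkout-v2
          port: 8080
          weight: 10
```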

Typed protocol support matters too. HTTP, gRPC, TCP, and TLS each have dedicated route resources instead of being pushed through the same Ingress object with different annotations. The extensibility model (ExtensionRef filters and the policy attachment pattern) allows vendors to add capabilities without breaking the portability of Standard channel resources.

What is genuinely harder

We want to be honest here. With great flexibility comes more complexity.

At minimum, you now manage three resources (GatewayClass, Gateway, HTTPRoute) where one Ingress resource previously handled everything.

TLS certificates move from the route level to the Gateway listener, which means the platform team and application teams need to coordinate on certificate lifecycle in a way Ingress never required. An HTTPRoute with a mismatched hostname does not produce an error. It is silently ignored, and the problem is only visible via status conditions. Compared to NGINX’s admission webhook rejections, this is a step backwards for debugging.
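The sketch below shows both points at once: the certificate reference sits on the Gateway listener, and the route only attaches if its hostname intersects the listener hostname. All names (`example-class`, `infra`, `team-a`, the Secret) are illustrative assumptions.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: shared-gateway
  namespace: infra
spec:
  gatewayClassName: example-class   # hypothetical GatewayClass
  listeners:
    - name: https
      protocol: HTTPS
      port: 443
      hostname: "*.example.com"
      tls:
        mode: Terminate
        certificateRefs:
          - name: wildcard-example-com-tls   # hypothetical Secret in the Gateway's namespace
      allowedRoutes:
        namespaces:
          from: All
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: web
  namespace: team-a
spec:
  parentRefs:
    - name: shared-gateway
      namespace: infra
      sectionName: https
  hostnames:
    - app.example.com      # intersects *.example.com, so the route attaches
    # - app.example.org    # would not intersect; nothing is rejected at apply time
  rules:
    - backendRefs:
        - name: web
          port: 8080
```

With the non-matching hostname, the manifest still applies cleanly. The only signal is in the route's `status.parents[].conditions`, so checking that status is worth building into your deployment pipeline.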

Policy standardization is incomplete. Rate limiting, authentication, and circuit breaking remain largely implementation-specific. If you depend on those features today through NGINX annotations, the equivalent in Gateway API will be vendor-specific until the broader policy SIG work matures.

The migration tool: ingress2gateway

The official ingress2gateway tool reached v1.0 in March 2026. It supports 30+ ingress-nginx annotations, including rewrites, CORS, canary, session affinity, backend TLS, regex matching, and rate-limit configuration. It produces Gateway API manifests from your existing Ingress resources and is genuinely useful as a starting point.

```shell
# Generate Gateway API manifests from existing Ingress resources
ingress2gateway print --namespace my-app
```

Treat it as a migration assistant, not a one-shot converter. The output requires review, and complex Lua or snippet-based logic needs manual translation.

Both APIs remain valid

Gateway API is where the ecosystem is going, but that does not mean everything must migrate today. Our recommendation: keep Ingress for stable workloads that are functioning correctly and would cost significant effort to rewrite; adopt Gateway API for new workloads or wherever you need advanced routing, multi-tenancy, or protocol flexibility. Every controller we cover in this post supports both APIs, although AGC de-emphasizes the Ingress path and recommends Gateway API as the primary interface.

Option: Traefik — Self-Hosted, Maximum Flexibility

Traefik is our recommendation for teams that want the fastest path from ingress-nginx without giving up control or locking into an Azure-specific solution.

The standout capability for migrations is Traefik’s kubernetes-ingress-nginx provider: a compatibility layer that reads your existing nginx.ingress.kubernetes.io/* annotations directly and maps them to Traefik routing configuration. You do not need to rewrite your Ingress manifests before switching controllers. This is unique to Traefik among the options in this post, and it means you can run Traefik alongside ingress-nginx, point test workloads at the new controller, validate behavior, and then shift traffic gradually, namespace by namespace, without a big-bang cutover.

On the Gateway API side, Traefik v3.7+ implements Gateway API v1.5.1, the highest conformance level of any option in this post. The full Standard channel is supported: HTTPRoute, GRPCRoute, TLSRoute, and BackendTLSPolicy. Ingress and Gateway API can run simultaneously through the same Traefik instance, so your migration can be as incremental as you want.
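For orientation, a Traefik-backed GatewayClass and Gateway might look like the sketch below. The controller name is the one Traefik documents for its Gateway API provider. The resource names and the listener port are assumptions: to our understanding, listener ports have to line up with a Traefik entryPoint, and `8000` is the default `web` entryPoint in the Helm chart, so verify both against your chart values.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: GatewayClass
metadata:
  name: traefik                    # hypothetical name
spec:
  controllerName: traefik.io/gateway-controller
---
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: traefik-gateway            # hypothetical name
  namespace: traefik               # hypothetical namespace
spec:
  gatewayClassName: traefik
  listeners:
    - name: web
      protocol: HTTP
      port: 8000                   # assumed to match the chart's default "web" entryPoint
      allowedRoutes:
        namespaces:
          from: All
```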

Deployment on AKS is straightforward: the standard Helm chart and a LoadBalancer Service backed by the Azure Standard Load Balancer. No special AKS prerequisites, no preview features required. It runs on any AKS configuration, including AKS Automatic.

For TLS, cert-manager v1.16+ supports Gateway API natively via the cert-manager.io/issuer annotation on the Gateway resource. Both HTTP-01 and DNS-01 (via Azure DNS) work without workarounds. There is no native Azure Key Vault integration in Traefik, but the standard combination of cert-manager and the Secrets Store CSI driver covers that gap.
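A minimal sketch of the DNS-01 variant, assuming a namespaced Issuer backed by Azure DNS with a managed identity. Every ID, zone, resource group, and name here is a placeholder, and the GatewayClass name is hypothetical.

```yaml
apiVersion: cert-manager.io/v1
kind: Issuer
metadata:
  name: letsencrypt-dns            # hypothetical name
  namespace: infra
spec:
  acme:
    server: https://acme-v02.api.letsencrypt.org/directory
    email: platform@example.com    # placeholder
    privateKeySecretRef:
      name: letsencrypt-dns-account-key
    solvers:
      - dns01:
          azureDNS:
            subscriptionID: 00000000-0000-0000-0000-000000000000   # placeholder
            resourceGroupName: dns-rg                              # placeholder
            hostedZoneName: example.com                            # placeholder
            environment: AzurePublicCloud
            managedIdentity:
              clientID: 00000000-0000-0000-0000-000000000000       # placeholder
---
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: shared-gateway
  namespace: infra
  annotations:
    cert-manager.io/issuer: letsencrypt-dns
spec:
  gatewayClassName: example-class  # hypothetical GatewayClass
  listeners:
    - name: https
      protocol: HTTPS
      port: 443
      hostname: app.example.com    # cert-manager derives the certificate's DNS name from this
      tls:
        mode: Terminate
        certificateRefs:
          - name: app-example-com-tls   # Secret that cert-manager creates and renews
```

cert-manager only acts on listeners that set a hostname, use `Terminate` mode, and reference a Secret, so those three fields are not optional in this setup.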

The main trade-off is WAF. Traefik OSS has no built-in WAF. You need to place Azure Front Door or Application Gateway v2 with WAF upstream of the cluster, or move to Traefik Hub for the commercial WAF integration. This is not necessarily a problem. In fact, for most multi-service platforms it is the correct architecture, but it is a conscious decision rather than an add-on checkbox.

Traefik is a CNCF Incubating project with active development and commercial support from Traefik Labs. The middleware ecosystem covers retry, circuit breaking, rate limiting, and authentication. Native Prometheus metrics and OpenTelemetry traces integrate naturally into Azure Monitor and Grafana.

Option: Cilium — If You Own Your Network Stack

Cilium is the right choice for teams building a greenfield cluster with a strong preference for eBPF-powered networking end-to-end and the operational maturity to manage their own CNI.

Cilium combines CNI, kube-proxy replacement, service mesh, and Gateway API controller in a single stack. The data plane uses eBPF for L3/L4 processing and an embedded Envoy proxy for L7, which means traffic is handled at the kernel level without the overhead of a separate proxy process. Hubble provides native L7 observability on every Gateway API flow without sidecars or additional agents. Cilium is a CNCF Graduated project with Isovalent (now Cisco) backing. Gateway API conformance sits at v1.4.0 with Cilium 1.19+.

There is a critical caveat for AKS that must be stated: Azure CNI Powered by Cilium (the managed Cilium mode on AKS) does not expose the Gateway API controller. Microsoft controls the Cilium configuration in managed mode and does not allow gatewayAPI.enabled=true. GitHub issues #5290 and #5444 track this gap, and there is no public ETA from Microsoft.

To use Cilium as a Gateway API controller on AKS, you must use BYO CNI (--network-plugin none), self-install Cilium with kubeProxyReplacement=true and gatewayAPI.enabled=true, and enable the AKS preview feature to disable kube-proxy. This gives you a fully functional Cilium Gateway API setup, but it puts full responsibility for CNI upgrades, Kubernetes version compatibility, and node pool OS image alignment on your team. AKS Automatic clusters are not supported in this configuration.
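As a rough sketch, the Helm values for such a self-installed Cilium could look like this. `kubeProxyReplacement` and `gatewayAPI.enabled` are the settings named above. `aksbyocni.enabled` and the API server host and port settings are our assumptions about what a BYO CNI cluster with kube-proxy replacement additionally needs, and the host value is a placeholder.

```yaml
# values.yaml for the cilium/cilium Helm chart (sketch, not a complete configuration)
aksbyocni:
  enabled: true                  # assumption: AKS BYO CNI mode of the chart
kubeProxyReplacement: true
k8sServiceHost: "REPLACE_WITH_API_SERVER_FQDN"   # placeholder; needed once kube-proxy is gone
k8sServicePort: 443
gatewayAPI:
  enabled: true
```

The Gateway API CRDs need to be installed in the cluster before Cilium starts; otherwise the Gateway API controller does not come up.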

This is not a minor operational consideration. Your entire network stack becomes self-managed. For teams that are prepared for that and want the unified eBPF networking and observability story, Cilium is the most powerful option. For teams that want managed infrastructure, the operational overhead is difficult to justify.

Option: Application Gateway for Containers

Application Gateway for Containers (AGC) is Azure’s managed, Gateway API-native load balancer for AKS, generally available since February 2024. It is the architectural successor to the legacy Application Gateway Ingress Controller and takes a fundamentally different approach: the load balancer lives outside the cluster as an Azure resource, controlled by the ALB Controller running inside AKS (two pods, authenticated via Workload Identity).

AGC supports both the Ingress API and Gateway API, but Gateway API is clearly the recommended path. Ingress support is included for migration compatibility, but it is more limited and carries constraints that Gateway API does not. If you choose AGC, plan to migrate to Gateway API. Conformance covers the Standard channel v1: GatewayClass, Gateway, HTTPRoute, and ReferenceGrant. GRPCRoute, TLSRoute, and the ListenerSets introduced in Gateway API v1.5 are not yet supported.

Web Application Firewall (WAF)

WAF support is a genuine strength of AGC. It is configured via the SecurityPolicy CRD, which maps to an AzureWebApplicationFirewallPolicy. A single AGC resource can reference multiple security policies, and WAF can be scoped per frontend. The cost impact is real: WAF roughly doubles all pricing meters. Multi-cluster deployments multiply this, since each cluster requires its own ALB Controller with a dedicated managed identity.

The cert-manager / Let’s Encrypt FQDN problem

This is an operational pitfall that is not obvious until you hit it, so we want to call it out explicitly.

Each AGC frontend receives a unique, auto-generated FQDN in the format fe-<random-chars>.alb.azure.com. When cert-manager creates a temporary Ingress resource to serve an HTTP-01 ACME challenge, the ALB Controller interprets that new Ingress as a request for a new frontend and provisions one with a different FQDN. Let’s Encrypt tries to validate domain ownership on the original domain, cannot reach the challenge endpoint, and the certificate issuance fails. This behavior is tracked in the cert-manager community and is a known limitation of AGC’s per-Ingress frontend model.

The workaround is to explicitly pin the frontend name in the cert-manager ClusterIssuer solver ingressTemplate using the alb.networking.azure.io/alb-frontend annotation. This forces cert-manager’s challenge Ingress onto the same frontend as your main workload, and the HTTP-01 challenge resolves correctly. Microsoft’s cert-manager guide for AGC documents the Gateway API path. DNS-01 challenges via Azure DNS avoid the problem entirely and are the cleaner approach for production deployments.
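A sketch of what that pinning could look like in a ClusterIssuer. The annotation key is the one named above. The IngressClass name `azure-alb-external`, the frontend name, and the e-mail address are assumptions to replace with your own values, and your deployment may need further `alb.networking.azure.io/*` annotations in the same template.

```yaml
apiVersion: cert-manager.io/v1
kind: ClusterIssuer
metadata:
  name: letsencrypt-http01         # hypothetical name
spec:
  acme:
    server: https://acme-v02.api.letsencrypt.org/directory
    email: platform@example.com    # placeholder
    privateKeySecretRef:
      name: letsencrypt-http01-account-key
    solvers:
      - http01:
          ingress:
            ingressClassName: azure-alb-external   # assumed AGC IngressClass name
            ingressTemplate:
              metadata:
                annotations:
                  # Pin the challenge Ingress to the frontend the workload already uses.
                  alb.networking.azure.io/alb-frontend: main-frontend   # hypothetical frontend name
```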

A second TLS constraint: Azure Key Vault integration via the Secrets Store CSI driver is not supported for AGC TLS. Certificates must exist as Kubernetes Secrets directly in the cluster. If Azure Key Vault is central to your certificate management, this is a meaningful limitation compared to the App Routing add-on.

Other limitations worth knowing

A few limitations to keep in mind:

- AGC is currently public-only: private frontend support has been announced but is not yet GA

- Only one association (delegated subnet) per AGC resource is supported

- The service is not available in all Azure regions, so check the supported regions list before designing around it

- Support for AKS Automatic clusters is currently in preview

Option: AKS Application Routing Add-on

The Application Routing add-on is Microsoft’s managed NGINX offering for AKS, and it is the lowest-friction migration path for anyone currently running self-managed ingress-nginx.

Mechanically, the add-on deploys a managed ingress-nginx instance in the app-routing-system namespace and registers the IngressClass webapprouting.kubernetes.azure.com. Migrating from self-managed ingress-nginx is largely a matter of updating ingressClassName on your existing Ingress resources. Most manifests work unchanged.

The built-in Azure integrations are the strongest argument for this option over self-managed Traefik. Azure DNS support (public and private zones via external-dns) is configured through the NginxIngressController CRD. Azure Key Vault TLS is native via the Secrets Store CSI driver — without the FQDN complications that affect AGC.
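For a typical workload, the migrated Ingress might look like the sketch below. The IngressClass name is the one the add-on registers. The Key Vault annotation and the `keyvault-<ingress-name>` Secret naming follow our reading of the add-on's Key Vault integration and should be verified against the current Microsoft documentation; the vault URI, host, and service names are placeholders.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: web
  namespace: team-a                # hypothetical namespace
  annotations:
    # Assumed annotation for the Key Vault integration; placeholder URI.
    kubernetes.azure.com/tls-cert-keyvault-uri: https://example-kv.vault.azure.net/certificates/web-tls
spec:
  ingressClassName: webapprouting.kubernetes.azure.com   # previously your self-managed class, e.g. nginx
  rules:
    - host: app.example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: web
                port:
                  number: 8080
  tls:
    - hosts:
        - app.example.com
      secretName: keyvault-web     # assumed convention: keyvault-<ingress name>
```

If you manage certificates yourself, drop the annotation and point `secretName` at your existing TLS Secret; the only required change is then the `ingressClassName`.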

There are restrictions: snippet annotations are blocked for security reasons, and the ingress-nginx ConfigMap is not directly editable. These are unlikely to be blockers for most teams, but teams with complex custom NGINX configurations should verify compatibility before committing.

Microsoft has committed to critical security patches through November 2026, which gives users of the managed add-on meaningfully more runway than the upstream EOL.

The roadmap matters here. Application Routing with Gateway API, powered by a lightweight Istio control plane (ingress-only — no sidecar injection, no full service mesh), entered Public Preview in March 2026. One important caveat for the preview: Azure DNS and Azure Key Vault TLS certificate management — which work natively in the NGINX-based add-on today — are not yet supported in the Gateway API mode.

If your requirements fit within its boundaries today, it is the most pragmatic choice on the list. But its simplicity might limit you in the future.

Don’t Only Look Inside the Cluster

When teams plan an ingress migration, attention naturally focuses on the Kubernetes layer. But if your platform serves traffic across AKS, Azure Container Apps, Azure Web Apps, or Azure Functions, or if you run more than one cluster, the in-cluster controller is only one part of the picture.

Azure Front Door and Azure Application Gateway v2 with WAF sit in front of all of these services, regardless of what runs inside the cluster. If you already have either in place, the WAF question is already answered at the platform layer. Adding AGC with its integrated WAF on top means paying twice for overlapping capability. In that case, a simpler, lower-cost in-cluster option like Traefik or the App Routing add-on is the right call, and WAF responsibility stays at the outer layer where it belongs and can protect all services uniformly.

The reverse argument is equally valid: if you are AKS-only and do not have an outer WAF layer, AGC’s integrated WAF is a strong reason to choose it over the self-managed alternatives.

The point is straightforward — do not design your ingress strategy in isolation from your broader Azure network topology. The right controller for your AKS cluster depends on what is already in front of it.

Decision Matrix

| Criterion | Traefik | Cilium GW | App Gateway for Containers | App Routing |
|---|---|---|---|---|
| Migration friction from ingress-nginx | 🟢 Lowest | 🟡 Medium | 🟡 Medium | 🟢 Lowest |
| Gateway API conformance | v1.5.1 (latest) | v1.4.0 | v1 Standard | In Preview |
| WAF | Third-party | External only | ✅ Built-in | External only |
| Private workloads | ✅ | ✅ | ❌ (public only) | ✅ |
| AKS Automatic compatible | ✅ | ❌ | ❌ | ✅ |
| Operational model | Self-managed | Self-managed (BYO CNI) | Managed Azure resource | Managed AKS add-on |
| Multi-tenancy | ✅ | ✅ | ✅ (per-cluster limit) | ✅ (NginxIngressController CRD) |
| Community / support | CNCF Incubating | CNCF Graduated | Microsoft SLA | Microsoft SLA |

Our Recommendation by Scenario

- “I need the fastest way off self-managed ingress-nginx”: Traefik. Use the NGINX annotation compatibility provider to run both controllers in parallel, validate workload by workload, and migrate at your own pace without rewriting manifests upfront.

- “We’re all-in on Azure, need integrated WAF, and want Gateway API now”: Application Gateway for Containers. Accept the cost, use DNS-01 with Azure DNS for cert-manager in production to avoid the FQDN issue, and plan for a per-cluster managed identity setup from the start.

- “We already have Front Door or App Gateway v2 with WAF in front of the cluster”: Traefik or App Routing. Keep WAF at the outer layer. Do not pay twice.

- “We’re building a greenfield cluster and want eBPF networking end-to-end”: Cilium. This assumes a team that is prepared to run BYO CNI and manage the CNI lifecycle on AKS independently.

- “Minimum change, fully managed, waiting for Gateway API”: App Routing add-on. You are covered with critical security patches until November 2026, and Microsoft is actively building the Istio-powered Gateway API path. Watch the AKS roadmap and plan for a second migration when it ships.

Wrapping Up

The retirement of ingress-nginx is not a crisis. The ecosystem has had years to mature alternatives, and the options available today on AKS are solid. The decision comes down to three things: whether you need WAF integrated at the cluster level or can place it upstream, whether you want managed Azure infrastructure or self-managed OSS, and what your cost tolerance per cluster looks like.

If you want support working through that decision for your specific platform setup, our Platform Engineering team can help you evaluate the right fit. Reach out here.