Dapr – The Future of Cloud-Native Development Is Here

Dapr v1.0.0 is here! To be precise, it was released tonight and is now considered ready for use in production workloads. In fact, there are already companies that are successfully using Dapr in production. So why is this exciting? Read on to find out how Dapr can help you be more productive when developing distributed systems such as microservices.


Why is Dapr relevant to you?

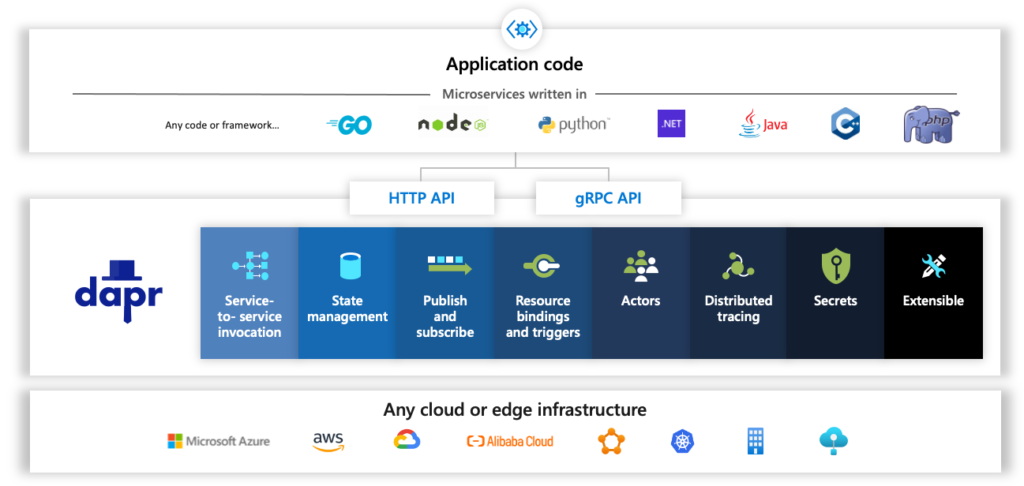

Dapr is a novel application runtime that does not tie developers to a particular programming language, framework, or environment. This allows cloud-native developers to truly embrace the polyglot nature of microservices. Furthermore, Dapr is specifically aimed at simplifying the development of distributed applications, a task commonly described as hard and error-prone. Possible use cases for Dapr are:

- Polyglot microservice architectures or applications

- Migrating a monolith to microservices step-by-step using Dapr building blocks

- Developing and running applications in different environments, e.g., local and cloud

- Multi-cloud scenarios

- Applications that need functionality the Dapr building blocks already provide


What is Dapr?

Dapr, the Distributed Application Runtime, is a project initiated and announced by Microsoft on October 16, 2019. However, Dapr is not owned by Microsoft and is being transitioned to an open governance model. It is an open-source project developed publicly on GitHub.

Dapr helps developers build event-driven, resilient distributed applications. Whether on-premises, in the cloud, or on an edge device, Dapr helps you tackle the challenges that come with building microservices and keeps your code platform agnostic.

Dapr – portable, event-driven, serverless runtime.

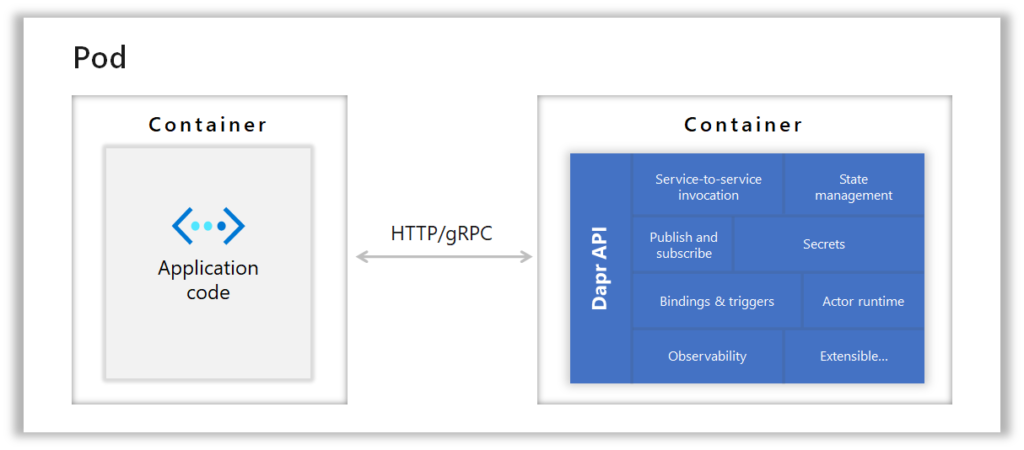

To achieve this, Dapr uses the sidecar pattern: the application code communicates with a Dapr process running next to it through HTTP or gRPC APIs. The general idea is to encode industry best practices in building blocks that simplify distributed application development. Instead of using a language-specific SDK or library to work with a third-party component, developers call a language-agnostic Dapr building block. This decouples application code from third-party services and therefore makes it easier to use different programming languages.
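Because the sidecar speaks plain HTTP, any language's standard HTTP client is enough to use it. The following Python sketch assumes a sidecar on localhost port 3500, the port used later in this article. The helper `dapr_request` is our own hypothetical name and is not part of any Dapr SDK. It only builds the request; `urllib.request.urlopen` would send it to a running sidecar.

```python
import json
import urllib.request
from typing import Any, Optional

# The sidecar runs next to the app, so it is reached via localhost (assumed port 3500).
DAPR_HOST = "http://localhost"


def dapr_request(method: str, path: str, payload: Optional[Any] = None,
                 port: int = 3500) -> urllib.request.Request:
    """Build a request for the sidecar's v1.0 HTTP API (hypothetical helper, no SDK)."""
    url = f"{DAPR_HOST}:{port}/v1.0/{path.lstrip('/')}"
    data = None
    headers = {}
    if payload is not None:
        # A JSON body produced with the standard library is all the API needs.
        data = json.dumps(payload).encode("utf-8")
        headers["Content-Type"] = "application/json"
    return urllib.request.Request(url, data=data, headers=headers, method=method)


if __name__ == "__main__":
    req = dapr_request("GET", "state/statestore/planets")
    print(req.get_method(), req.full_url)
    # Against a running sidecar: urllib.request.urlopen(req)
```

The same few lines could be written with the built-in HTTP client of Go, Java or Node.js. This is the sense in which the building blocks are language-agnostic.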


How does it work?

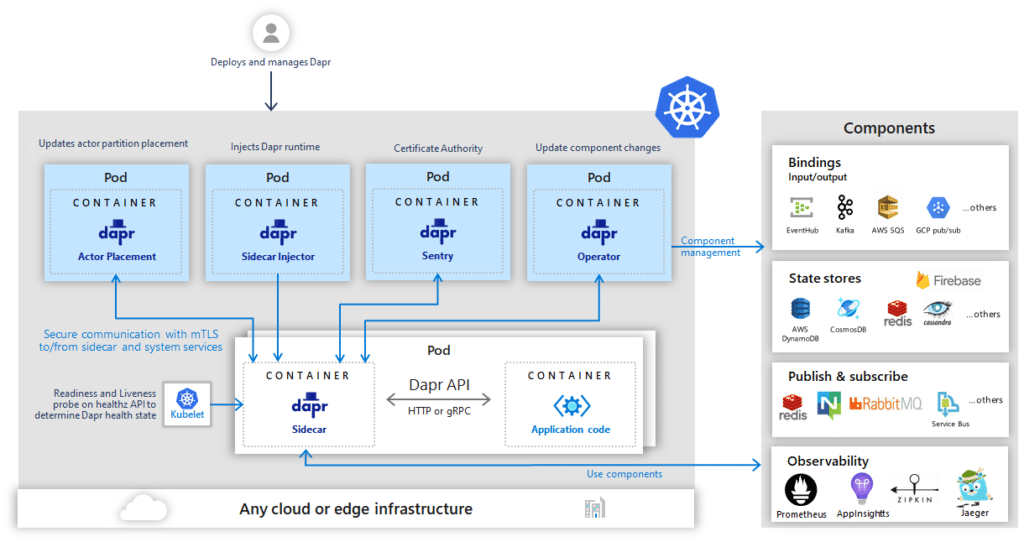

As already mentioned, Dapr consists of various building blocks that run in a sidecar alongside the application. Each building block specifies a behaviour and an API, which are implemented by Dapr components. Building blocks are therefore the specification, whereas components are the actual implementation. This allows each building block to have several implementations that can be swapped without affecting the application. The application only sees and uses the Dapr API and knows nothing about the implementation behind it. As a result, third-party dependencies can be swapped declaratively: the only thing that changes is the YAML file that configures the third-party component.
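A component is declared in a manifest. Its `metadata.name` is what the application refers to, and its `spec.type` selects the implementation. The Python sketch below builds two such manifests as dictionaries that mirror the YAML structure of a `dapr.io/v1alpha1` `Component`. The Redis metadata keys are the ones used by the Redis component that `dapr init` creates. The MongoDB entry and its metadata key are illustrative assumptions, so check that component's documentation for its real fields.

```python
from typing import Dict


def component(name: str, component_type: str, metadata: Dict[str, str]) -> dict:
    """Return a Dapr component manifest as a dict (normally written as YAML)."""
    return {
        "apiVersion": "dapr.io/v1alpha1",
        "kind": "Component",
        "metadata": {"name": name},          # the name the application uses
        "spec": {
            "type": component_type,          # which implementation backs the building block
            "version": "v1",
            "metadata": [{"name": k, "value": v} for k, v in metadata.items()],
        },
    }


def state_endpoint(manifest: dict, port: int = 3500) -> str:
    """The URL the app calls: it depends only on the component's name."""
    return f"http://localhost:{port}/v1.0/state/{manifest['metadata']['name']}"


redis_store = component("statestore", "state.redis",
                        {"redisHost": "localhost:6379", "redisPassword": ""})
# Illustrative alternative backend; its metadata key is an assumption.
mongo_store = component("statestore", "state.mongodb", {"host": "localhost:27017"})

# Different implementations, identical application-facing endpoint.
assert redis_store["spec"]["type"] != mongo_store["spec"]["type"]
assert state_endpoint(redis_store) == state_endpoint(mongo_store)
```

In practice each manifest lives in its own YAML file. Replacing one file with the other is the declarative swap, and the application code does not change.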

In Kubernetes, the sidecar providing the Dapr functionality runs as a separate container in the application's Pod. Readers who know service meshes will find this familiar, since both Dapr and service meshes use a sidecar to extend the functionality of an application. However, service mesh sidecars are transparent to the application, whereas Dapr sidecars are meant to be called explicitly by the application via HTTP or gRPC.
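In Kubernetes, an application opts in to the sidecar through annotations on its Pod template. Dapr's sidecar injector then adds the Dapr container when the Pod is created. The Python sketch below adds the `dapr.io/enabled`, `dapr.io/app-id` and `dapr.io/app-port` annotations to a Deployment manifest held as a dictionary. The app ID `myapp`, port `5000` and image name are placeholders.

```python
import copy


def with_dapr_sidecar(deployment: dict, app_id: str, app_port: int) -> dict:
    """Return a copy of a Deployment whose Pod template requests a Dapr sidecar."""
    result = copy.deepcopy(deployment)  # leave the input manifest untouched
    template_meta = result["spec"]["template"].setdefault("metadata", {})
    annotations = template_meta.setdefault("annotations", {})
    # Kubernetes annotation values must be strings.
    annotations.update({
        "dapr.io/enabled": "true",
        "dapr.io/app-id": app_id,
        "dapr.io/app-port": str(app_port),
    })
    return result


deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "myapp"},
    "spec": {
        "selector": {"matchLabels": {"app": "myapp"}},
        "template": {
            "metadata": {"labels": {"app": "myapp"}},
            # Only the application container is declared; the sidecar is injected.
            "spec": {"containers": [{"name": "myapp", "image": "example/myapp:latest"}]},
        },
    },
}

annotated = with_dapr_sidecar(deployment, "myapp", 5000)
```

The manifest itself still lists only the application container. The second container that makes up the sidecar pattern appears in the running Pod, not in the source manifest.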


Building Blocks Overview

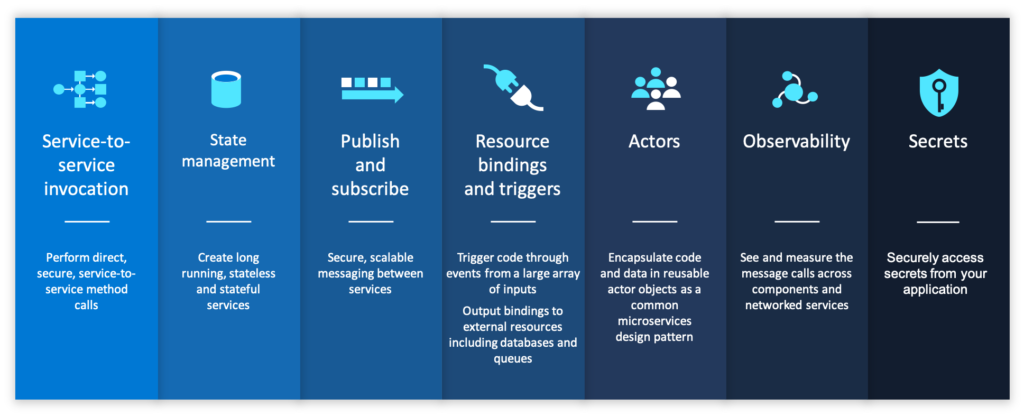

Dapr building blocks are pluggable and opt-in, so cloud-native developers can choose only the functionality they need. The building blocks provide what is needed to build resilient, event-driven and stateful distributed applications without the complexity of implementing that functionality yourself.


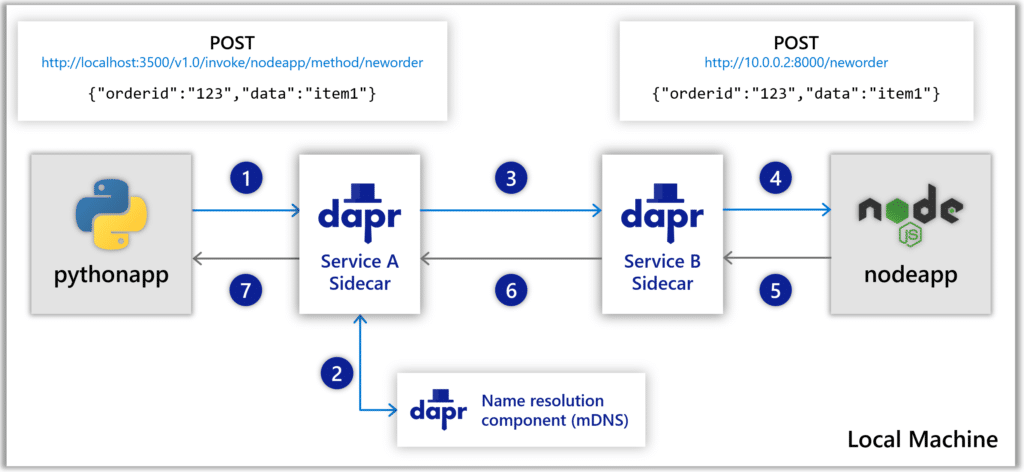

Service-to-Service Invocation

The service-to-service invocation building block provides distributed applications with service discovery and service invocation. In the example shown, the Python app invokes a method in the Node app simply by sending an HTTP POST request with the data to its Dapr sidecar. Dapr takes care of service discovery, service invocation, security, retries, transient errors and even distributed tracing, without any additional code in the Python or Node app. In short, Dapr provides developers with an endpoint that acts as a reverse proxy with built-in service discovery, and additionally offers distributed tracing, metrics and error handling.
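On the wire, the Python app's call is an ordinary HTTP request to its own sidecar, addressed by the target's app ID and method name. The sketch below assumes the hypothetical app ID `nodeapp`, a hypothetical method `neworder` and a sidecar on port 3500. It builds the request with the standard library rather than sending it.

```python
import json
import urllib.request


def invoke_request(app_id: str, method_name: str, payload: dict,
                   dapr_port: int = 3500) -> urllib.request.Request:
    """POST to the local sidecar, which resolves `app_id` and forwards the call."""
    url = f"http://localhost:{dapr_port}/v1.0/invoke/{app_id}/method/{method_name}"
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical target app ID, method name and payload.
req = invoke_request("nodeapp", "neworder", {"data": {"orderId": "42"}})
print(req.get_method(), req.full_url)
# With both apps running under Dapr, urllib.request.urlopen(req) would send the
# body via the sidecars to the Node app's "neworder" method.
```

The caller never needs the Node app's host or port. The app ID in the URL is all it provides, and the sidecar resolves it through service discovery.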


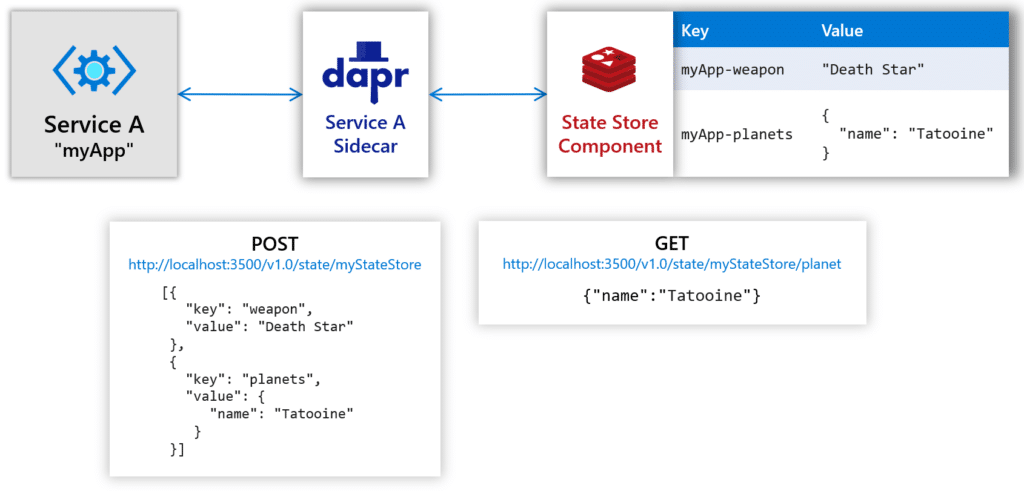

State Management

The state management building block offers key/value storage APIs for communicating with third-party state stores. To store data in a state store component, the myApp application only needs to send a POST request to its Dapr sidecar. The request contains the name of the state store (myStateStore in the example) and the data as key/value pairs in JSON. Data is retrieved the same way, by sending a GET request to the Dapr sidecar. Again, the state store must be specified, and in this case also the key of the data, which is planets. This sounds trivial at first, but Dapr additionally handles distributed concurrency, data consistency, retry policies and even bulk CRUD operations. All of this is provided through an easy-to-use API, without the need to add or learn a third-party SDK.
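The save and read calls described above can be sketched with Python's standard library. The store name `myStateStore` and the key `planets` come from the example. The stored value and the sidecar port 3500 are assumptions. The save body is a JSON array of key/value objects, so several items can be stored in one request.

```python
import json
import urllib.request

DAPR_PORT = 3500          # assumed sidecar HTTP port
STORE = "myStateStore"    # state store component name from the example


def save_state_request(key: str, value) -> urllib.request.Request:
    """Save one key/value pair; the body is a JSON array of {key, value} objects."""
    body = [{"key": key, "value": value}]
    return urllib.request.Request(
        f"http://localhost:{DAPR_PORT}/v1.0/state/{STORE}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def get_state_request(key: str) -> urllib.request.Request:
    """Read a value back with a plain GET on store name plus key."""
    return urllib.request.Request(
        f"http://localhost:{DAPR_PORT}/v1.0/state/{STORE}/{key}", method="GET")


# Hypothetical value stored under the example's key.
save = save_state_request("planets", ["Mercury", "Venus", "Earth"])
get = get_state_request("planets")
```

Neither request mentions the backing database. Whether the data ends up in Redis or another store is decided by the component configuration.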

Publish and Subscribe

The publish and subscribe building block enables distributed applications to communicate asynchronously via messages. This pattern is particularly useful for decoupling microservices from each other. Traditionally, however, it creates a dependency on a message broker or queuing system that manages the topics to which applications publish messages or subscribe to receive them. In addition, each application depends on a specific third-party component and needs code to interact with it. Dapr removes this coupling to a specific pub/sub component, making the application more portable. Furthermore, Dapr offers an at-least-once delivery guarantee, consumer groups with multiple application instances, and topic scoping. Again, this is provided without adding code or new SDKs to the application.
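The sketch below shows both sides. It assumes a pub/sub component named `pubsub`, the name `dapr init` gives its Redis pub/sub component, and a hypothetical topic `orders`. The publisher posts to its sidecar. The subscriber tells its sidecar which topics it wants by answering `GET /dapr/subscribe` and then receives each message as a CloudEvent on the listed route; the HTTP server wiring is left out. Delivery is at-least-once, so the handler skips CloudEvent IDs it has already processed. This assumes a redelivered message keeps its ID. Keeping the IDs in memory is a simplification; a real service would persist them.

```python
import json
import urllib.request
from typing import List, Set

PUBSUB = "pubsub"   # assumed pub/sub component name
TOPIC = "orders"    # hypothetical topic


def publish_request(message: dict, dapr_port: int = 3500) -> urllib.request.Request:
    """Publisher side: hand the message to the local sidecar."""
    return urllib.request.Request(
        f"http://localhost:{dapr_port}/v1.0/publish/{PUBSUB}/{TOPIC}",
        data=json.dumps(message).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def subscriptions() -> List[dict]:
    """Subscriber side: the JSON body to return from GET /dapr/subscribe."""
    return [{"pubsubname": PUBSUB, "topic": TOPIC, "route": "/orders"}]


class OrderHandler:
    """Processes CloudEvents posted to /orders; safe under redelivery."""

    def __init__(self) -> None:
        self.seen_ids: Set[str] = set()   # in-memory only, a simplification
        self.processed: List[dict] = []

    def handle(self, cloud_event: dict) -> None:
        event_id = cloud_event["id"]      # CloudEvents always carry an id
        if event_id in self.seen_ids:
            return                        # duplicate delivery: already processed
        self.seen_ids.add(event_id)
        self.processed.append(cloud_event["data"])


handler = OrderHandler()
event = {"id": "e1", "data": {"orderId": 42}}
handler.handle(event)
handler.handle(event)  # simulated redelivery of the same event
assert handler.processed == [{"orderId": 42}]
```

Neither side names a broker. Switching from Redis to another message broker means changing the `pubsub` component definition, not this code.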

More

A complete overview of all building blocks and their technical details can be found in the building blocks section of the Dapr Docs.


Developing with Dapr

To start developing with Dapr, head over to the getting started section of the Dapr Docs. Dapr provides an easy-to-use CLI and can be run in self-hosted mode or in Docker containers, so setting up a development environment is simple and takes only minutes. To set up Dapr, you only need to run:

dapr init

This gives you a Redis container instance for state management and publish and subscribe, a Zipkin container instance for observability, a default components folder holding component definitions for the Redis and Zipkin containers, and a Dapr placement service container instance for actor support. So, with a single command, you are ready to go. Starting an application with a Dapr sidecar is now as simple as running the following command. It starts a Dapr sidecar for the application with the ID myapp and exposes the sidecar's HTTP API on port 3500. The app ID is simply assigned with the --app-id flag. The components the sidecar uses are loaded from the components folder that dapr init created.

dapr run --app-id myapp --dapr-http-port 3500

Furthermore, Dapr provides developers with several SDKs to simplify interaction with Dapr in the respective language. However, the SDKs are completely optional and only a convenience layer above the HTTP or gRPC API.
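When `dapr run` is given the application's own start command after `--`, it launches the app together with the sidecar. It also tells the app where the sidecar listens through the `DAPR_HTTP_PORT` environment variable. The Python sketch below builds such a command line and shows the app side reading the port, with 3500 as the fallback. The app ID `myapp`, app port `5000` and `app.py` are placeholders.

```python
import os
import shlex
from typing import List, Mapping


def dapr_run_command(app_id: str, app_port: int, dapr_http_port: int,
                     app_command: List[str]) -> List[str]:
    """Build a `dapr run` invocation that starts the app alongside its sidecar."""
    return ["dapr", "run",
            "--app-id", app_id,
            "--app-port", str(app_port),              # where the app itself listens
            "--dapr-http-port", str(dapr_http_port),  # where the sidecar listens
            "--", *app_command]                       # the app's own start command


def sidecar_port(env: Mapping[str, str] = os.environ) -> int:
    """Inside the app: locate the sidecar via DAPR_HTTP_PORT, defaulting to 3500."""
    return int(env.get("DAPR_HTTP_PORT", "3500"))


cmd = dapr_run_command("myapp", 5000, 3500, ["python", "app.py"])
print(shlex.join(cmd))
```

Reading the port from the environment, instead of hard-coding it, keeps the application unchanged when the sidecar's port is changed on the command line.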

Now to see how this works in practice, check out our Dapr-demo on GitHub. It demonstrates the use of the service invocation, state management and publish & subscribe building blocks in a polyglot microservice architecture.


Conclusion

We think Dapr is an exciting technology and the next evolutionary step for cloud-native development after service meshes. It has the potential to simplify the development of distributed applications and finally make polyglot microservice architectures a viable choice. So, if you are a distributed application developer, you should check out Dapr!

Many thanks to all the contributors who make this open-source project possible!